Finished reading: Life in Code by Ellen Ullman 📚

Thinking more about arXiv and public-facing publishing: Back in the day, my mentor was among the first on campus to successfully argue that public-facing publishing should count toward tenure, as service and outreach at minimum. She was on the edge of digital studies, and by the time her work was formally peer-reviewed it risked being irrelevant. At the time, in the early 00s, blogging was the primary mechanism to get the job done, and peer review happened online in real time through interaction with other scholars. This also foreshadowed the “influencer” model that we know today – scholars who cultivated interest and influence through these informal channels saw their formal work gain traction in formal channels.

Do you have what it takes to be the next McDonald’s CEO? A quiz about this week’s funniest business story.

Some notes on arXiv: arXiv is a preprint server hosted and maintained by Cornell University, where researchers post their work to establish priority and get it in front of other researchers quickly. This kind of server is common in emerging fields, to help build a body of shared knowledge among researchers, and fast.

With all the speculation around AI futures, arXiv papers are suddenly hot content on Twitter/X, paired with aggressive forecasting and commentary. This is causing a lot of ruckus among the ranks. arXiv papers are a-okay to reference as sources of current AI research, but I’d flag a couple of things: they’re technical documents written for other researchers, not press releases or news coverage, so the claims in them are often much narrower and more specific than how they get interpreted and reported.

Good and bad uses of AI on a website, from Sumy Designs.

A smart take on nonconsensual deepfakes, considering them not just as a social issue but as a cybersecurity issue, as they’re often connected to broader financial and social harassment campaigns.

Reaching back to 2025 to put this article on the pile of AI commentary: Cottom’s argument here is that AI, for all the breathless hype around it, is a “mid” technology, one that makes modest augmentations to existing processes while its loudest boosters use it to justify employing fewer people and delegitimizing expertise. Around the time the article was published, she supplemented with some additional video commentary worth watching.

She draws on Acemoglu and Restrepo’s concept of “so-so” technologies and traces a pattern from MOOCs to DOGE, where each iteration promises transformation but delivers incremental improvements at best and labor displacement at worst. The real danger, she argues, is that AI’s most compelling use case in the current political environment is threatening and demoralizing workers and justifying cuts, not revolutionizing how work gets done.

Cottom has been one of the writers I keep returning to because she is not dismissing the technology or retreating into reactionary nostalgia. She looks past the product announcements to the political economy underneath them. Who benefits from the hype cycle? What happens to the institutional infrastructure (education, research, public expertise) that AI claims to augment and simultaneously threatens to starve? She’s also very active on Instagram (and promoting a new documentary) and tracing the news around AI and higher ed in real time.

Platformer on the emerging politics of military AI, and the backlash resulting from this week’s activities.

Some content notes: while I haven’t quite figured out how much I want to broadcast this space, I have put a couple of feedback mechanisms into place, including an email loop as well as turning on comments for those logged into micro.blog, Bluesky and Mastodon. If you’re reading along, say hello.

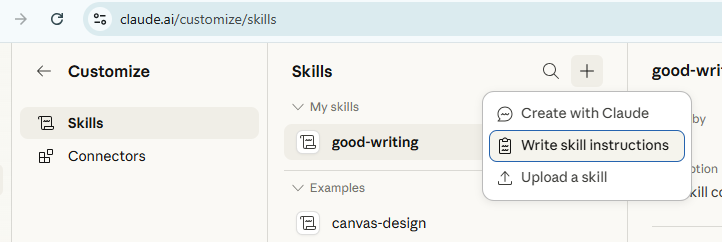

LLMs have a default house writing style with identifiable patterns: sentence fragments for emphasis, “not X, but Y” constructions, lots of hard contrast, atmospheric openings, heavy use of em dashes, and heavy use of marketing language. This reflects the semantic construction of an LLM. Custom instructions can override these defaults. A custom skill is a set of instructions within your account that modify how the model generates text. When you paste instructions into your profile settings, Claude reads them at the start of every conversation and adjusts its output accordingly.

I began using Claude daily for light writing tasks about six months ago, and over that time I started cataloging the patterns I was consistently editing out, including the terrible “not X, but Y” construction that showed up in nearly every response, and persistent em dashes used as all-purpose connectors when other punctuation is more appropriate.

I went through several iterations of bullying Claude into submission, narrowing the scope each time, before arriving at this version, which focuses specifically on writing mechanics and hard prohibitions.

You’ll need a paid Claude plan (Pro, Max, Team, or Enterprise). Free-tier accounts don’t have access to custom skills.

• Within the app, navigate to Customize > Skills and Create new skills

• Select add a new skill and Write skill instructions

• Copy and paste the text from this file into the skill, making note of the name and description boxes. Feel free to tinker.

• Save your changes.

Note: The instructions in the linked file are Claude’s work, not mine. They came out of months of conversation, where Claude would analyze my style notes, and the file evolved from there. They read a little strangely because of that process. If I’d written them from scratch, they’d sound different. But looking at the file you can see what Claude responds to and how it works.

Claude will apply these instructions to every new conversation going forward. Existing conversations won’t pick up the change, so start a fresh chat to test it. If and when Claude struggles to apply the skill, call it out specifically in the prompt, such as, “Revise this for length using the good writing skill.”

The skill specifies constraints in a few categories and the instructions are plain text. As you go, you can also ask Claude to analyze previous conversations for suggested additions to the skill, which Claude will produce and implement within the chat. Each rule operates independently, so removing one doesn’t affect the others.

Claude processes custom instructions at the start of every conversation, before it generates any output. The instructions function as constraints on the model’s default behavior. The model doesn’t always follow every instruction perfectly and the results vary by task. You will still need to edit.
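For that last editing pass, a small checker can flag two of the patterns named earlier (em dashes and the “not X, but Y” construction) in a draft. This is a minimal sketch, not part of the skill file: the function name `flag_house_style`, the pattern names, and both regular expressions are my own assumptions, and the “not X, but Y” regex is a rough heuristic that will miss some cases and over-flag others.

```python
import re

# Hypothetical pattern names and regexes; tune these for your own drafts.
PATTERNS = {
    # The em dash character, written as an escape so it is easy to spot.
    "em_dash": re.compile(r"\u2014"),
    # "not ... but" within a single sentence, with an optional comma before "but".
    "not_x_but_y": re.compile(r"\bnot\b[^.!?\n]{1,60}?,?\s+but\b", re.IGNORECASE),
}


def flag_house_style(text):
    """Return a list of (pattern_name, matched_text) pairs found in text."""
    hits = []
    for name, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            hits.append((name, match.group(0)))
    return hits


if __name__ == "__main__":
    draft = "This is not a bug, but a feature."
    for name, snippet in flag_house_style(draft):
        print(f"{name}: {snippet!r}")
```

Run it over a response before you paste it anywhere. Anything it flags is a prompt to reread the sentence, not a verdict.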

WaPo on the many issues of LLM house writing styles. I have much to add. Here are some notes on applying a writing skill to override house style on Claude.

This new report from Anthropic is depressing at best, as it tries to measure which employment sectors carry the most exposure around AI expansion into the economy. In short, the tech is likely to impact two groups the hardest: educated professional women, and young workers for whom the career ladder will never materialize. In a right-side-up world, this would change the political dynamics of any policy response considerably. In this one, I don’t know.

Anthropic’s positioning here is curious, very god tricky. They are claiming the mantle of responsibility and transparency while predicting an inevitable end nobody wants, one that they’re also selling as a service.

I still think much of the forecasting is oversold – the tech performs well in optimized environments, and last mile issues are a perennial concern in any engineering venture because the practical world is non-optimal. Time will tell, and there are big incentives in play. But the hunger and animus around the forecast feel bad.

A thorough look at the loss of institutional hip-hop media archives and what it means for culture writing today.

NPR’s Code Switch on the gentrification of the Internet.

I have pretty strong feelings against the use of AI around military operations, based on my hands-on experience with the tools. When the tech’s creators say the tech isn’t ripe for warfare, that’s strong feedback. And I agree: given the risks and implications around trust, marketing and hallucinations, it’s not even really ripe for the consumer marketplace, much less drone warfare. Whether or not artificial intelligence tech should be used for war is, of course, at the root of Haraway’s thesis, which we like to noodle with around these parts.

You might call this a taste test: Obsessed with the story about the McDonald’s CEO and how his LinkedIn-style videos selling the McD’s franchise have escaped containment, leading to one of the funnier CEO/product marketing dynamics in recent history. Burger King’s CEO swooped in, holding widely marketed listening sessions with customers to demonstrate his love for the Whopper, in contrast with the deeply weird McD’s videos. Must read: Internet long-hauler Katie Notopoulos on how direct-to-public marketing works when the public is more familiar with the product than the org’s leaders.

My teenager thinks fast food is cool and subversive (cue the sound of one hundred moms groaning) and she and her friends regularly talk shop. They are Taco Bell fans and think burger stops are kind of gauche. Not gauche: Baja Blast.

In the meantime, the fast food sector is becoming a playground for AI approaches, causing a lot of nervous discussion on social media and in tech spaces.

Following the news on Meta glasses this week and how real people are moderating that content loop, watching and moderating all of our intimate video footage, including our most private moments. Last year, my book club read a speculative fiction novel about this very dynamic called “Moderation” by Elaine Castillo. Recommended.

Ryan Broderick is currently my fav reporter on the Internet culture beat, and on this podcast he’s talking about Epstein and why he was interested in putting his resources to developing the early internet (spoiler: for crimes).

Ruling: AI art is not copyrightable.